Social media platforms, such as Facebook and Twitter, provide people with a lot of information, but it’s getting harder and harder to tell what’s real and what’s not.

Researchers at the University of Washington wanted to know how people investigated potentially suspicious posts on their own feeds. The team watched 25 participants scroll through their Facebook or Twitter feeds while, unbeknownst to them, a Google Chrome extension randomly added debunked content on top of some of the real posts. Participants had various reactions to encountering a fake post: Some outright ignored it, some took it at face value, some investigated whether it was true, and some were suspicious of it but then chose to ignore it. These results have been accepted to the 2020 ACM CHI conference on Human Factors in Computing Systems.

“We wanted to understand what people do when they encounter fake news or misinformation in their feeds. Do they notice it? What do they do about it?” said senior author Franziska Roesner, a UW associate professor in the Paul G. Allen School of Computer Science & Engineering. “There are a lot of people who are trying to be good consumers of information and they’re struggling. If we can understand what these people are doing, we might be able to design tools that can help them.”

Previous research on how people interact with misinformation asked participants to examine content from a researcher-created account, not from someone they chose to follow.

“That might make people automatically suspicious,” said lead author Christine Geeng, a UW doctoral student in the Allen School. “We made sure that all the posts looked like they came from people that our participants followed.”

The researchers recruited participants ages 18 to 74 from across the Seattle area, explaining that the team was interested in seeing how people use social media. Participants used Twitter or Facebook at least once a week and often used the social media platforms on a laptop.

Then the team developed a Chrome extension that randomly overlaid fake posts or memes, all of which had been debunked by the fact-checking website Snopes.com, on top of real posts, making it temporarily appear that they were being shared by people on participants’ feeds. So instead of seeing a cousin’s post about a recent vacation, a participant would see their cousin share one of the fake stories.
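The overlay idea can be illustrated with a minimal sketch. This is not the researchers' extension, which ran inside Chrome and whose code is not described here. It is a simplified Python model that assumes a feed is a list of posts and that each debunked item replaces the visible content of one randomly chosen real post while keeping that post's author. The names `Post` and `overlay_debunked` are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    content: str
    is_injected: bool = False  # True when the visible content is a debunked item


def overlay_debunked(feed, debunked, rng):
    """Return a copy of `feed` in which each debunked item is shown in place of
    one randomly chosen real post, still attributed to that post's author."""
    if len(debunked) > len(feed):
        raise ValueError("need at least as many real posts as debunked items")
    # Pick distinct feed positions at random, one per debunked item.
    slots = rng.sample(range(len(feed)), k=len(debunked))
    result = list(feed)
    for slot, item in zip(slots, debunked):
        result[slot] = Post(author=feed[slot].author, content=item, is_injected=True)
    return result


# Hypothetical data: 20 real posts and nine debunked items
# (nine matches the number of fake posts in the Facebook condition).
feed = [Post(f"friend_{i}", f"real post {i}") for i in range(20)]
debunked = [f"debunked story {i}" for i in range(9)]
modified = overlay_debunked(feed, debunked, random.Random(0))
```

Keeping the original author on each replaced post mirrors the study's goal of making fake content look as though it came from someone the participant already follows. The original `feed` list is left unchanged, much as the real posts remained intact beneath the overlay.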

Either the researchers installed the extension on the participant’s laptop, or the participant logged into their accounts on the researcher’s laptop, which had the extension enabled. The team told the participants that the extension would modify their feeds (the researchers did not say how) and would track their likes and shares during the study, though in fact it wasn’t tracking anything. The extension was removed from participants’ laptops at the end of the study.

“We’d have them scroll through their feeds with the extension active,” Geeng said. “I told them to think aloud about what they were doing or what they would do if they were in a situation without me in the room. So then people would talk about ‘Oh yeah, I would read this article,’ or ‘I would skip this.’ Sometimes I would ask questions like, ‘Why are you skipping this? Why would you like that?’”

Participants could not actually like or share the fake posts. On Twitter, a “retweet” would share the real content beneath the fake post. The one time a participant did retweet content under the fake post, the researchers helped them undo it after the study was over. On Facebook, the like and share buttons didn’t work at all.

After the participants encountered all the fake posts — nine for Facebook and seven for Twitter — the researchers stopped the study and explained what was going on.

“It wasn’t like we said, ‘Hey, there were some fake posts in there.’ We said, ‘It’s hard to spot misinformation. Here were all the fake posts you just saw. These were fake, and your friends did not really post them,’” Geeng said. “Our goal was not to trick participants or to make them feel exposed. We wanted to normalize the difficulty of determining what’s fake and what’s not.”

The researchers concluded the interview by asking participants to share what types of strategies they use to detect misinformation.

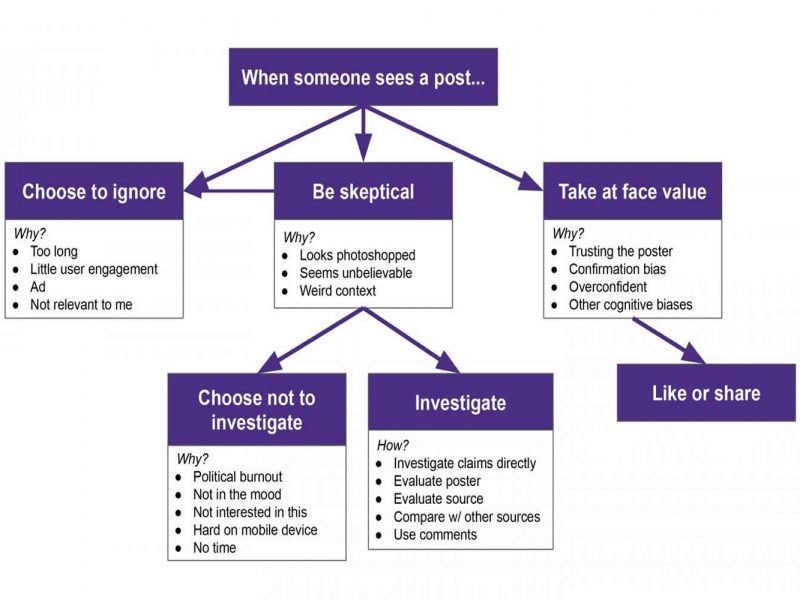

In general, the researchers found that participants ignored many posts, especially those they deemed too long, overly political or not relevant to them.

But certain types of posts made participants skeptical. For example, people noticed when a post didn’t match someone’s usual content. Sometimes participants investigated suspicious posts by looking at who posted them, evaluating the content’s source or reading the comments below the post. Other times, people just scrolled past.

“I am interested in the times that people are skeptical but then choose not to investigate. Do they still incorporate it into their worldviews somehow?” Roesner said. “At the time someone might say, ‘That’s an ad. I’m going to ignore it.’ But then later do they remember something about the content, and forget that it was from an ad they skipped? That’s something we’re trying to study more now.”

While this study was small, it does provide a framework for how people react to misinformation on social media, the team said. Now researchers can use this as a starting point to seek interventions to help people resist misinformation in their feeds.

“Participants had these strong models of what their feeds and the people in their social network were normally like. They noticed when it was weird. And that surprised me a little,” Roesner said. “It’s easy to say we need to build these social media platforms so that people don’t get confused by fake posts. But I think there are opportunities for designers to incorporate people and their understanding of their own networks to design better social media platforms.”